Exploring Responsible Tech in Testing: Ethical Frameworks and Real-World Implications

This article was originally published on the LambdaTest blog.

In the rapidly evolving landscape of technology, responsible tech practices are gaining significant importance. Ethical considerations, accountability, and the impact of technology on various stakeholders have become crucial aspects of software development. This article delves into the principles and practices of implementing responsible tech in testing, aiming to create a better, fairer, and more inclusive technological future. From data privacy to bias detection and mitigation, transparency to collaboration, these principles guide testers to ensure that technology is developed and tested ethically and responsibly.

Understanding Responsible Tech in Testing

In the realm of responsible tech in testing, it is crucial to grasp the concept of ethical frameworks and their significance. Ethical frameworks provide a set of principles and guidelines that shape the decision-making process and actions of testers. These frameworks help ensure that technology is developed, tested, and deployed in an ethical and responsible manner.

Failing to consider ethical frameworks while creating a testing strategy can lead to adverse consequences. Real-world examples demonstrate the importance of ethical considerations in testing. For instance, the Cambridge Analytica scandal highlighted the lack of proper ethical framework implementation, resulting in the unauthorized collection and misuse of personal data from Facebook users. This breach of ethical principles not only eroded user trust but also raised concerns about the potential manipulation of personal information for political purposes.

Fairness is a fundamental principle within responsible tech in testing. It refers to the impartial treatment of individuals and the absence of biases or discrimination in the development and deployment of technology. Real-world examples shed light on the consequences of disregarding fairness. The use of biased algorithms in facial recognition systems has been a prominent issue, as these systems have shown significant disparities in accuracy across different racial and ethnic groups. This lack of fairness perpetuates discrimination and amplifies existing societal biases.

Inclusivity is another vital aspect of responsible tech in testing. It entails ensuring that technology is accessible and usable by all individuals, regardless of their abilities, language, or cultural background. Neglecting inclusivity can have profound real-world implications. For instance, if a website or application is not designed and tested with accessibility features in mind, it can exclude individuals with disabilities from accessing important services or information. This exclusionary approach not only violates ethical principles but also hampers the ability of individuals to participate fully in society.

By understanding the importance of ethical frameworks, fairness, and inclusivity, testers can actively work towards creating responsible technology. Integrating these principles into testing strategies enables identifying and mitigating potential ethical pitfalls, promotes unbiased decision-making, and ensures that technology is accessible to all. Responsible tech in testing goes beyond technical proficiency; it emphasizes the ethical and social dimensions of technology, ultimately shaping a more equitable and inclusive digital future.

Ensuring Test Data Privacy

Ensuring test data privacy is a critical aspect of responsible tech in testing. It involves safeguarding personal and sensitive data used during testing to protect individuals' privacy and maintain compliance with data protection regulations.

Real-world incidents highlight the importance of test data privacy. In 2019, Capital One experienced a data breach that exposed the personal information of over 100 million customers. Public reporting attributed the breach to a misconfigured firewall in the company's cloud infrastructure rather than to a test environment specifically, but the lesson carries over: a single configuration weakness can expose data at scale, and test environments that hold real customer data are often less well protected than production. The incident compromised customer privacy and resulted in significant reputational damage and legal consequences for the organization.

In its Technology Radar, Thoughtworks highlighted the risks of making production data available in test environments. The practice has caused real harm, such as incorrect alerts being sent from a test system to an entire client population. Test systems also tend to have weaker privacy protections than production, which is a serious security risk. Even when sensitive fields such as credit card numbers are obfuscated, moving production data into test databases is still an invasion of privacy. The problem is compounded in complex cloud deployments where test systems are hosted in, or accessed from, several regions.

Thoughtworks acknowledges that there can be legitimate reasons to copy specific elements of production data, such as reproducing bugs or training machine learning models, and advises proceeding with caution in those cases. In general, it recommends synthetic or fake data as the safer approach. Organizations that keep these factors in mind can reduce risk and manage data properly throughout the testing process.

Furthermore, organizations are encouraged to be mindful of the usage of production data in lower environments. It is crucial to evaluate and limit access to production data to prevent any inadvertent exposure or misuse. Organizations should implement strict access controls and encryption mechanisms to safeguard the privacy of customer data during testing.

By adhering to these guidelines and practices, organizations can prioritize test data privacy and minimize the risk of customer data breaches. Thoughtful consideration of test data usage and the implementation of anonymization techniques help balance realistic testing scenarios and protect customer privacy, fostering responsible tech practices in testing.
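As a rough illustration of the synthetic-data approach described above, the following Python sketch generates fictional customer records and pseudonymizes an identifier with a keyed hash. Everything in it is an assumption made for the example: the field names, the name lists, the `PSEUDONYM_KEY` value, and the helper functions are hypothetical and are not taken from any particular tool or from the Thoughtworks guidance.

```python
import hashlib
import hmac
import random

# Hypothetical key used only for this illustration; a real key would come
# from a secrets manager and never be committed to source control.
PSEUDONYM_KEY = b"example-test-only-key"

FIRST_NAMES = ["Ada", "Grace", "Linus", "Margaret", "Alan"]
LAST_NAMES = ["Lovelace", "Hopper", "Torvalds", "Hamilton", "Turing"]


def synthetic_customer(rng: random.Random) -> dict:
    """Build one entirely fictional customer record."""
    first = rng.choice(FIRST_NAMES)
    last = rng.choice(LAST_NAMES)
    return {
        "name": f"{first} {last}",
        # example.com is reserved for documentation, so no real inbox is hit.
        "email": f"{first}.{last}.{rng.randrange(10_000)}@example.com".lower(),
        # A fixed fake prefix plus random digits, not a real card number.
        "card_number": "0000" + "".join(str(rng.randrange(10)) for _ in range(12)),
    }


def pseudonymize(value: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Replace an identifier with a keyed hash: stable for joins, unreadable to testers."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


rng = random.Random(42)  # fixed seed keeps test runs reproducible
customers = [synthetic_customer(rng) for _ in range(3)]
```

Fully synthetic records are the safer default. Keyed hashing is pseudonymization rather than anonymization, so it suits the narrower cases where a subset of production data genuinely has to be copied and identifiers must remain consistent across tables.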

Addressing Bias in Testing

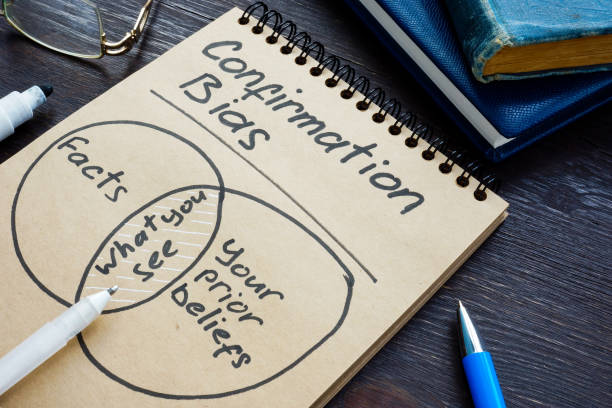

Addressing bias in testing is crucial to ensure fair and unbiased outcomes. Testers, like many individuals, can introduce biases into their testing process and decisions without realizing it. It's essential to be aware of the different types of biases that can occur and take steps to mitigate them.

One common type of bias is confirmation bias, where testers unconsciously seek evidence that confirms their expectations about the software being tested. This can lead them to overlook potential issues and to dismiss outcomes they did not expect. Testers should actively challenge their assumptions and remain open to all possibilities, diligently seeking evidence that may contradict their initial beliefs.

Another bias is availability bias, which occurs when testers rely heavily on readily available information or examples, rather than considering a broader range of possibilities. This can limit the scope of testing and overlook potential issues that may arise in different scenarios. Testers should consciously expand their perspective, consult diverse sources, and consider a wide range of test cases to mitigate availability bias.

One notable real-world example of biased testing occurred with facial recognition software. Some facial recognition systems exhibited higher error rates when identifying women or individuals with darker skin tones than when identifying men or individuals with lighter skin tones. This bias stemmed from the underrepresentation of these groups in the data used to train the software, leading to inaccurate and discriminatory results. To avoid such biases, testers should ensure that the data sets used for training and for testing are diverse and representative of the target user population.
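One way a tester might surface this kind of disparity is to break test results down by group instead of reporting a single overall error rate. The sketch below is a minimal Python example under stated assumptions: the group labels, the sample outcomes, and the `DISPARITY_THRESHOLD` value are all invented for illustration, and a real threshold would need to be agreed with the wider team.

```python
from collections import defaultdict


def error_rate_by_group(results):
    """results: iterable of (group, expected, actual) tuples from a test run."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, expected, actual in results:
        totals[group] += 1
        if expected != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


def max_disparity(rates):
    """Largest gap in error rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical labelled outcomes: (group, expected match, actual match)
sample = [
    ("group_a", True, True),
    ("group_a", True, True),
    ("group_a", False, False),
    ("group_a", True, False),
    ("group_b", True, False),
    ("group_b", True, False),
    ("group_b", False, False),
    ("group_b", True, True),
]

rates = error_rate_by_group(sample)

# Assumed value for illustration; a team might fail a build above this gap.
DISPARITY_THRESHOLD = 0.10
disparity_too_high = max_disparity(rates) > DISPARITY_THRESHOLD
```

With this sample data, `group_a` has one error in four cases and `group_b` has two in four, so the gap exceeds the assumed threshold. An aggregate error rate alone would hide that difference.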

To address biases during testing, it's crucial to implement measures such as:

Diverse Test Data: Ensure that the test data sets used in testing represent a wide range of demographic groups and characteristics to minimize the risk of biased outcomes.

User-Centric Approach: Adopt a user-centric testing approach, considering the perspectives and needs of various user groups, including marginalized or underrepresented communities.

Peer Reviews and Collaboration: Encourage peer reviews and collaboration among testers to bring diverse perspectives and identify potential biases that an individual tester may overlook.

Continuous Education: Foster a culture of continuous learning and education within the testing team, promoting awareness of biases and providing training on techniques for bias detection and mitigation.

By being vigilant, self-reflective, and proactive in addressing biases, testers can contribute to more inclusive, fair, and unbiased testing processes, ultimately leading to the development of responsible and ethical technology.

Inclusive Testing

Inclusive testing is a vital aspect of responsible tech practices, as it ensures that software is accessible and usable for individuals from diverse backgrounds and abilities. When inclusive testing is not prioritized or done well, organizations can face significant consequences that impact their business.

One real-world incident that highlights the importance of inclusive testing is the case of a popular ride-sharing application. The application was initially designed with limited consideration for individuals with visual impairments. The lack of inclusive testing meant that the app had significant accessibility barriers, making it difficult or impossible for visually impaired users to book rides independently. This resulted in negative user experiences, loss of potential customers, and legal repercussions as the app failed to meet accessibility standards.

In another example, an e-commerce website launched a new feature without conducting thorough inclusive testing. The feature involved a voice recognition system for searching and navigating the site. However, the testing process overlooked the fact that the system struggled to understand accents and dialects other than the dominant one. As a result, users with different language backgrounds had difficulty using the feature effectively. This led to frustration among users, a decrease in user engagement, and ultimately a loss of business for the organization.

To avoid such incidents, organizations should adopt inclusive testing practices. This means involving users from diverse backgrounds during the testing process, conducting usability studies, and adhering to accessibility standards. By including individuals with different abilities, languages, and cultural backgrounds in the testing phase, organizations can identify and address potential barriers or biases that may otherwise go unnoticed.

Usability studies play a crucial role in inclusive testing. By observing users interacting with the software and gathering their feedback, organizations can gain insights into potential challenges faced by different user groups. This feedback can then be used to make necessary improvements and ensure a more inclusive and user-friendly experience.

Furthermore, organizations must consider accessibility standards, such as the Web Content Accessibility Guidelines (WCAG), during the testing process. These guidelines provide a comprehensive framework for creating accessible digital experiences. By adhering to these standards, organizations can ensure that their software is usable by individuals with disabilities, including those with visual impairments, hearing impairments, motor disabilities, and cognitive impairments.
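Some WCAG criteria can be checked automatically as part of a test suite. As one hedged example, the Python sketch below implements the contrast ratio calculation defined in WCAG 2.x and checks it against the 4.5:1 minimum that Level AA requires for normal-size text (success criterion 1.4.3). The function names are chosen for this illustration only, and automated checks of this kind complement rather than replace testing with real users.

```python
def _linearize(channel: int) -> float:
    """Convert one 8-bit sRGB channel to its linear value, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb) -> float:
    """Relative luminance of an (r, g, b) colour with channels in 0..255."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background) -> float:
    """Contrast ratio between two colours, ranging from 1 (identical) to 21."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa_normal_text(foreground, background) -> bool:
    """True when the pair meets the 4.5:1 minimum for normal-size text at Level AA."""
    return contrast_ratio(foreground, background) >= 4.5


WHITE = (255, 255, 255)
passes = meets_aa_normal_text((118, 118, 118), WHITE)  # grey #767676 on white
fails = meets_aa_normal_text((119, 119, 119), WHITE)   # grey #777777 on white
```

The two greys differ by a single step per channel, yet one passes and the other falls just short of 4.5:1. Differences this small are hard to judge by eye, which is a reason to compute the ratio rather than rely on visual review.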

Inclusive testing not only enhances the user experience but also fosters a positive brand image, improves customer satisfaction, and opens up new market opportunities. By considering the diverse needs of users and conducting thorough testing, organizations can create software that is truly inclusive and meets the needs of a wider range of individuals.

In conclusion, inclusive testing is essential for creating software that is accessible, usable, and inclusive for all users. Neglecting inclusive testing can lead to negative user experiences, loss of business, and potential legal ramifications. By involving diverse users, conducting usability studies, and adhering to accessibility standards, organizations can ensure that their software embraces inclusivity, caters to diverse user needs, and aligns with responsible tech principles.

Collaborative Testing

Collaborative testing is a crucial aspect of responsible tech practices, as it promotes effective communication, knowledge sharing, and collaboration among multidisciplinary teams. By involving stakeholders from legal, ethics, and user experience domains, organizations can harness diverse perspectives to identify and address ethical concerns more effectively, leading to responsible tech outcomes.

One of the key benefits of collaborative testing is the ability to bring together individuals with different areas of expertise. Legal experts can provide insights into compliance requirements, privacy concerns, and potential legal ramifications. Ethics specialists can offer guidance on ethical considerations, ensuring that the software aligns with moral and societal values. User experience professionals can contribute insights into usability, accessibility, and user-centred design principles.

By incorporating these diverse perspectives, collaborative testing helps to identify potential ethical issues early in the development lifecycle. For example, during the testing process, legal experts may identify data privacy concerns that need to be addressed. Ethics specialists can raise awareness of potential biases or discriminatory outcomes that the software may exhibit. User experience professionals can offer feedback on the usability and inclusivity of the software, ensuring that it caters to a wide range of users.

To perform collaborative testing effectively, organizations can adopt various testing methodologies:

Cross-Functional Testing: This approach involves forming multidisciplinary teams that include members from different domains such as legal, ethics, user experience, development, and quality assurance. They collaborate throughout the testing process, sharing knowledge, exchanging feedback, and collectively addressing ethical considerations.

User-Centred Design Testing: This methodology focuses on involving end-users throughout the testing process. By conducting user research, gathering user feedback, and incorporating user testing sessions, organizations can ensure that the software meets the needs and expectations of the target users. Collaborating with user experience professionals and involving end-users fosters responsible tech practices by prioritizing user-centricity and inclusivity.

Ethical Hacking and Red Teaming: These approaches involve intentionally attempting to exploit vulnerabilities in the software to identify security and ethical risks. By bringing together ethical hackers, security experts, and testers, organizations can perform rigorous assessments to uncover potential weaknesses and address them collaboratively.

The goal of collaborative testing is to foster an environment where different perspectives are valued, and responsible tech principles are upheld. By actively involving stakeholders from legal, ethics, and user experience domains, organizations can identify and mitigate potential ethical concerns early on, leading to the development of software that is not only functional but also aligns with ethical and societal standards.

Continuous Learning

Continuous learning and improvement are essential components of responsible tech in testing. In a rapidly evolving technological landscape, testers must stay updated on emerging ethical challenges, best practices, and evolving regulations. By fostering a culture of continuous learning, organizations can adapt to changing requirements and enhance their responsible tech practices.

Staying informed about emerging ethical challenges is vital to address potential risks and ensure responsible software testing. New technologies, data privacy concerns, and societal expectations continually shape the ethical landscape of software development and testing. Testers should actively seek knowledge through resources such as research papers, industry publications, webinars, and conferences that cover topics related to responsible tech.

Additionally, understanding and following best practices in responsible tech testing is crucial. These practices encompass a wide range of considerations, including inclusivity, privacy, security, transparency, and fairness. Testers should continuously educate themselves on these practices and incorporate them into their testing strategies and processes.

Regulations surrounding technology and data protection are also subject to change. Organizations must keep abreast of relevant laws and regulations to ensure compliance and ethical conduct. This includes regulations like the General Data Protection Regulation (GDPR), California Consumer Privacy Act (CCPA), and emerging legislation that addresses ethical considerations in technology. By staying informed and incorporating regulatory requirements into testing practices, organizations can mitigate legal risks and demonstrate their commitment to responsible tech.

Fostering a learning mindset within the testing team is equally important. Testers should be encouraged to explore new ideas, experiment with different approaches, and share what they learn with their peers. This can be achieved through regular knowledge-sharing sessions, workshops, and collaboration within the team. Creating an environment that values learning and improvement promotes responsible tech practices and ensures that testers remain proactive in addressing ethical considerations.

Furthermore, organizations can leverage internal and external resources to facilitate continuous learning. They can invest in training programs, workshops, and certifications that focus on responsible tech in testing. Engaging external experts or consultants can also provide valuable insights and guidance to further enhance responsible tech practices within the organization.

In conclusion, continuous learning and improvement are fundamental to responsible tech in testing. By staying updated on emerging ethical challenges, following best practices, and complying with evolving regulations, organizations can adapt to changing requirements and ensure responsible software testing. Fostering a learning mindset within the testing team promotes knowledge-sharing and collaboration, enabling the team to proactively address ethical considerations and contribute to the development of more responsible technology.

Transparency

Transparency is a crucial principle in responsible tech practices, including software testing. It refers to openness, visibility, and clear communication throughout the testing process. When transparency is not maintained in testing or product development, various adverse effects can occur, impacting the product, the organization, and the overall culture.

One real-world example of the consequences of lacking transparency is the Volkswagen emissions scandal. In this case, Volkswagen intentionally manipulated emissions test results for their diesel vehicles, deceiving regulators and the public. The lack of transparency in their testing practices led to severe reputational damage, legal consequences, and financial losses. This incident highlighted the importance of transparency in maintaining trust with stakeholders and ensuring ethical behavior throughout the testing process.

When transparency is compromised in testing, several adverse effects can emerge:

Lack of Accountability: Without transparency, it becomes challenging to identify who is responsible for specific testing decisions, actions, or errors. This lack of accountability can hinder the ability to rectify issues promptly and prevent recurrence.

Inadequate Risk Mitigation: Transparency is essential for identifying and addressing potential risks during testing. When transparency is lacking, risks may go unnoticed, leading to the release of products with unresolved vulnerabilities or flaws that could have significant consequences.

Limited Collaboration: Transparency fosters collaboration among team members, enabling effective communication, knowledge sharing, and collective problem-solving. Without transparency, collaboration may suffer, hindering the effectiveness and efficiency of the team.

Decreased Stakeholder Trust: Transparency is vital for building and maintaining trust with stakeholders, including users, customers, and regulators. Lack of transparency erodes trust, as stakeholders may perceive the organization as secretive or unaccountable for its actions.

Negative Organizational Culture: Lack of transparency can contribute to a culture of secrecy, silos, and distrust within the organization. This can hamper innovation, hinder collaboration, and lead to diminished employee morale and engagement.

To address these challenges, organizations should prioritize transparency in testing by:

Providing relevant stakeholders with access to information about the testing strategy and test results.

Communicating openly about any limitations, potential risks, or known issues in the software being tested.

Encouraging open dialogue, feedback, and knowledge sharing among team members.

Establishing mechanisms for reporting concerns, errors, or ethical dilemmas in testing without fear of retribution.

By embracing transparency in testing, organizations can build trust, foster collaboration, and ensure ethical practices, ultimately leading to the development of more responsible and reliable technology.

Conclusion

Implementing responsible tech principles in testing is crucial for creating a more ethical, accountable, and inclusive technological landscape. By adhering to ethical frameworks, ensuring data privacy, addressing biases, promoting inclusivity, and fostering transparency, testers play a vital role in shaping responsible technology. The principles discussed in this article provide a foundation for testers to approach their work with a commitment to ethical and responsible practices. As technology continues to advance, the integration of responsible tech in testing becomes increasingly important to mitigate potential harms and build a better future for all.